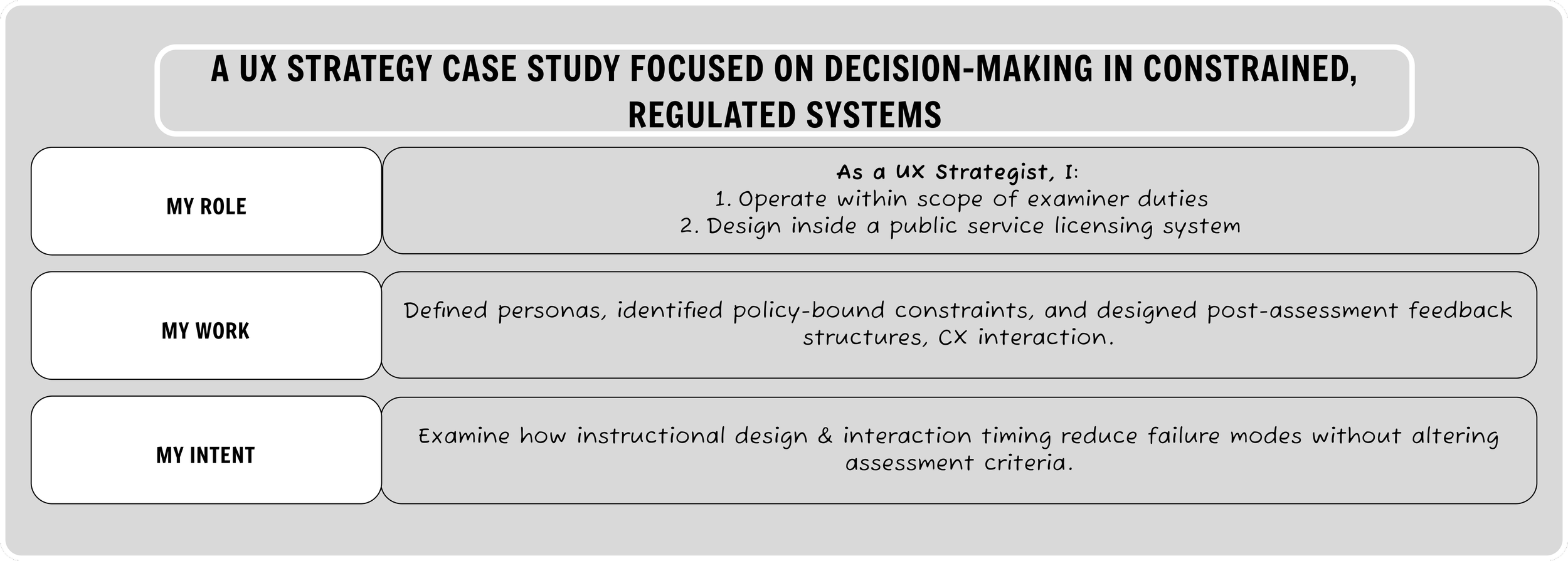

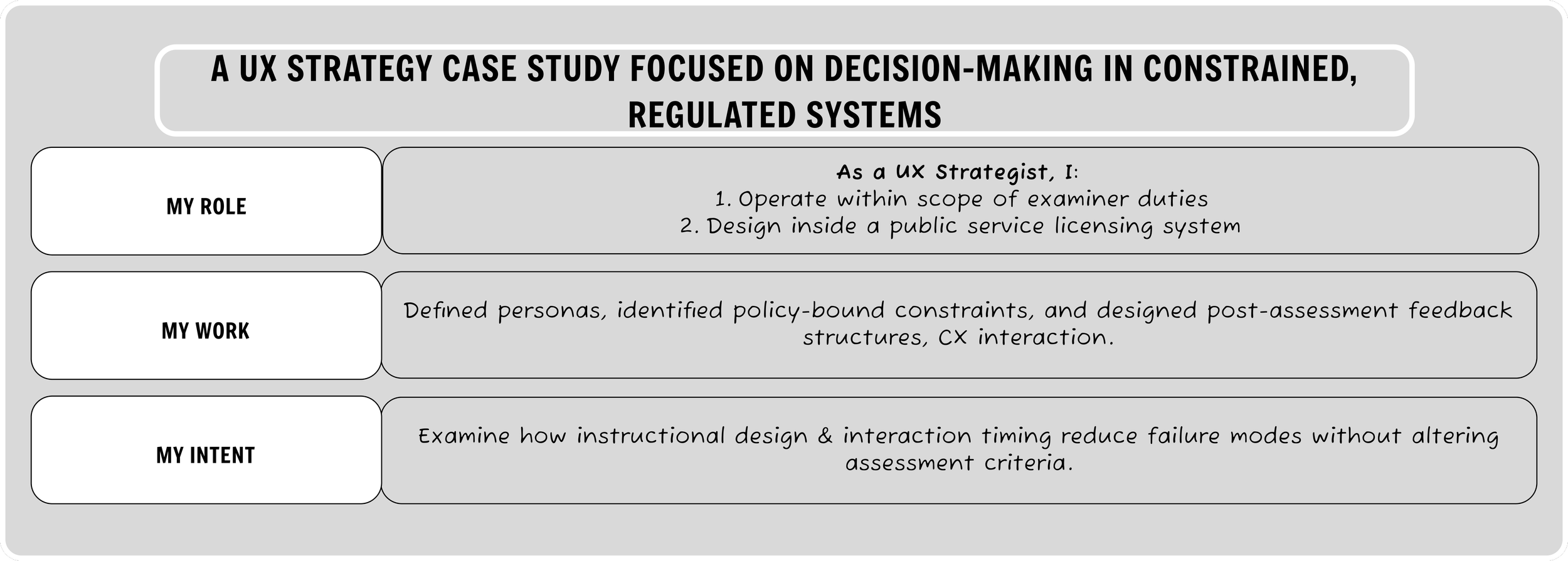

Applied UX Strategy in Fixed-Rule Systems

Making Constraint-Bound Decision Environments Legible Through Structured Design Artifacts

Problem framing

Because post-assessment feedback is a required procedural step, each debrief functioned as an embedded qualitative interview. Across ~5,000 administered licensing assessments over 2.5 years, recurring breakdown moments were informally logged, then systematized into repeatable failure archetypes.

In fixed-rule regulatory systems, failures do not occur randomly. They cluster around recurring mismatches between evaluation criteria and examinee mental models.

When standards cannot change, the only viable design surface exists at the interaction layer: how those standards are communicated, sequenced, and cognitively processed within policy boundaries.

This work examines how UX strategy operates inside those constraints — translating longitudinal operational pattern recognition into structured artifacts that make decision environments legible without compromising governance integrity.

Recurring failure patterns consistently clustered around instruction timing misalignment, examinee mental model mismatches, and cognitive overload at transitional route points.

As a License Examiner within the public service system under study, I had a rare opportunity to collect qualitative data through lived, longitudinal ethnographic research. Because scoring criteria were fixed by regulation, intervention was limited to communication architecture: refining instruction sequencing, pre-maneuver framing, and post-assessment clarification without altering formal standards.

Microcosmic Operationalization — Parallel Parking

Context

Parallel parking mirrors the broader exam structure: instructions are delivered, demonstration occurs, and feedback follows. Because parking is an automatic-fail component and repeat attempts are common, it provides a contained environment where instructional strategy impact becomes immediately observable.

Friction Observed

Across thousands of administered exams, recurring friction clustered around:

Underestimation of scoring depth for parallel parking

Confusion between a corrective maneuver and a full parking attempt

Cognitive reset difficulty when transitioning from the knowledge-of-controls phase to the parking phase

Delayed realization of misunderstanding after instruction delivery

Strategic Intervention

Without altering any procedural language or scoring standards, and without compromising procedural integrity, I reframed instruction delivery by:

Orienting examinees to the parking phase as a distinct cognitive segment

Introducing the instruction as “three things to understand about parallel parking”

Clarifying attempt structure vs. corrective maneuver allowances

Preemptively addressing common false assumptions

This preserved regulatory integrity while restructuring the communication architecture.

Methods

Longitudinal pattern recognition across ~5,000 assessments

Informal pattern logging through dual-purposed end-of-exam feedback dialogue

Iterative refinement of instruction sequencing and framing

Real-time confirmation through examinee comprehension signals and reduced clarification requests

Observed Outcomes

Reduced frequently asked clarification questions after instruction delivery

Increased first-attempt comprehension among repeat examinees

Observable alignment between feedback framing and second-attempt success patterns

Lowered cognitive load during maneuver execution without altering scoring criteria

Over time, clarification questions decreased noticeably. Repeat examinees referenced the structured framing unprompted.

Edge Case: When an examinee fails on a third attempt despite engaging with the framing intervention, the post-exam feedback structure provides a reusable mental model that has repeatedly surfaced in subsequent successful attempts.

This approach generalizes to other fixed-rule systems where evaluation standards are immutable but interaction architecture determines user comprehension and compliance outcomes.

Failure Archetypes: Common mismatched mental models

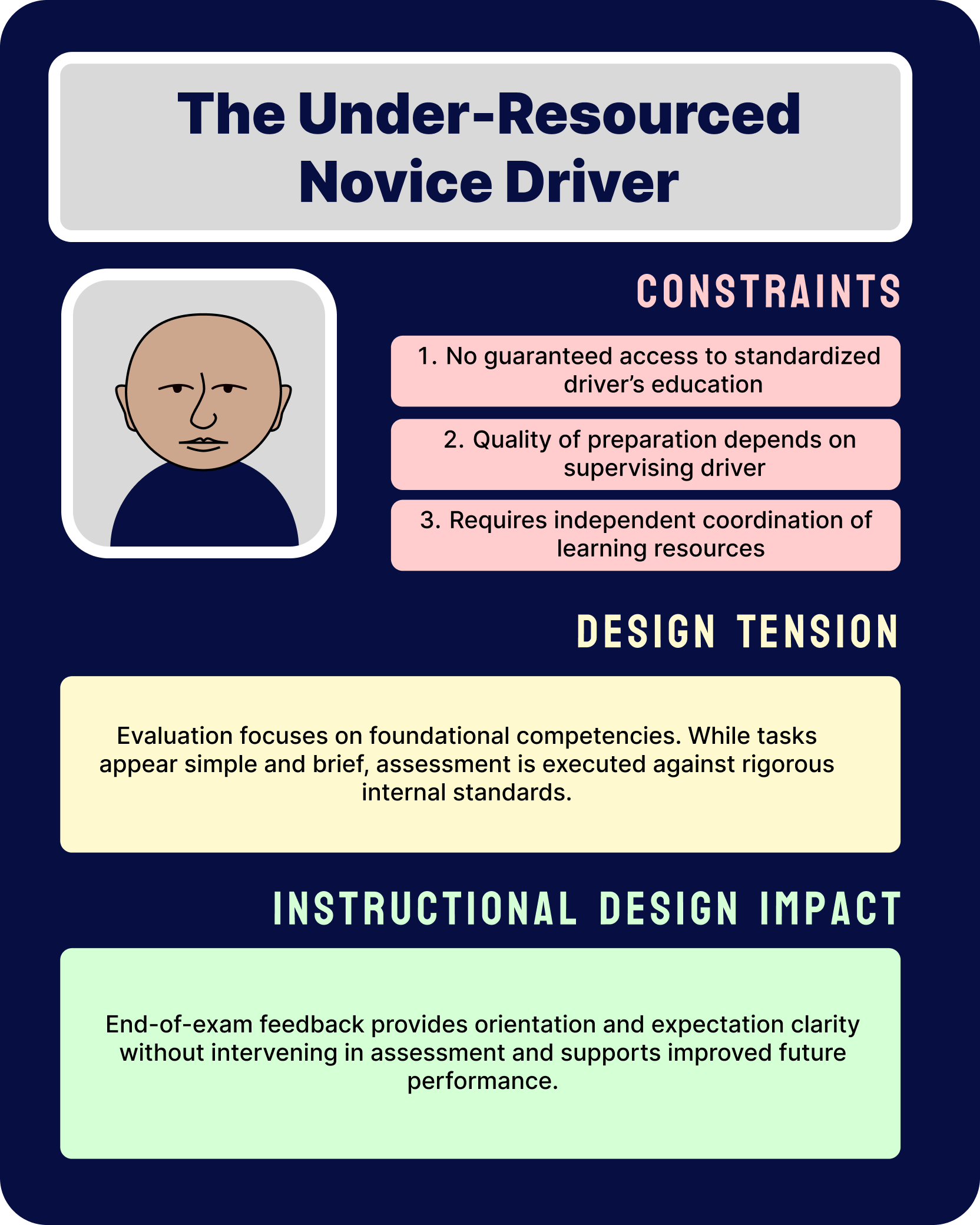

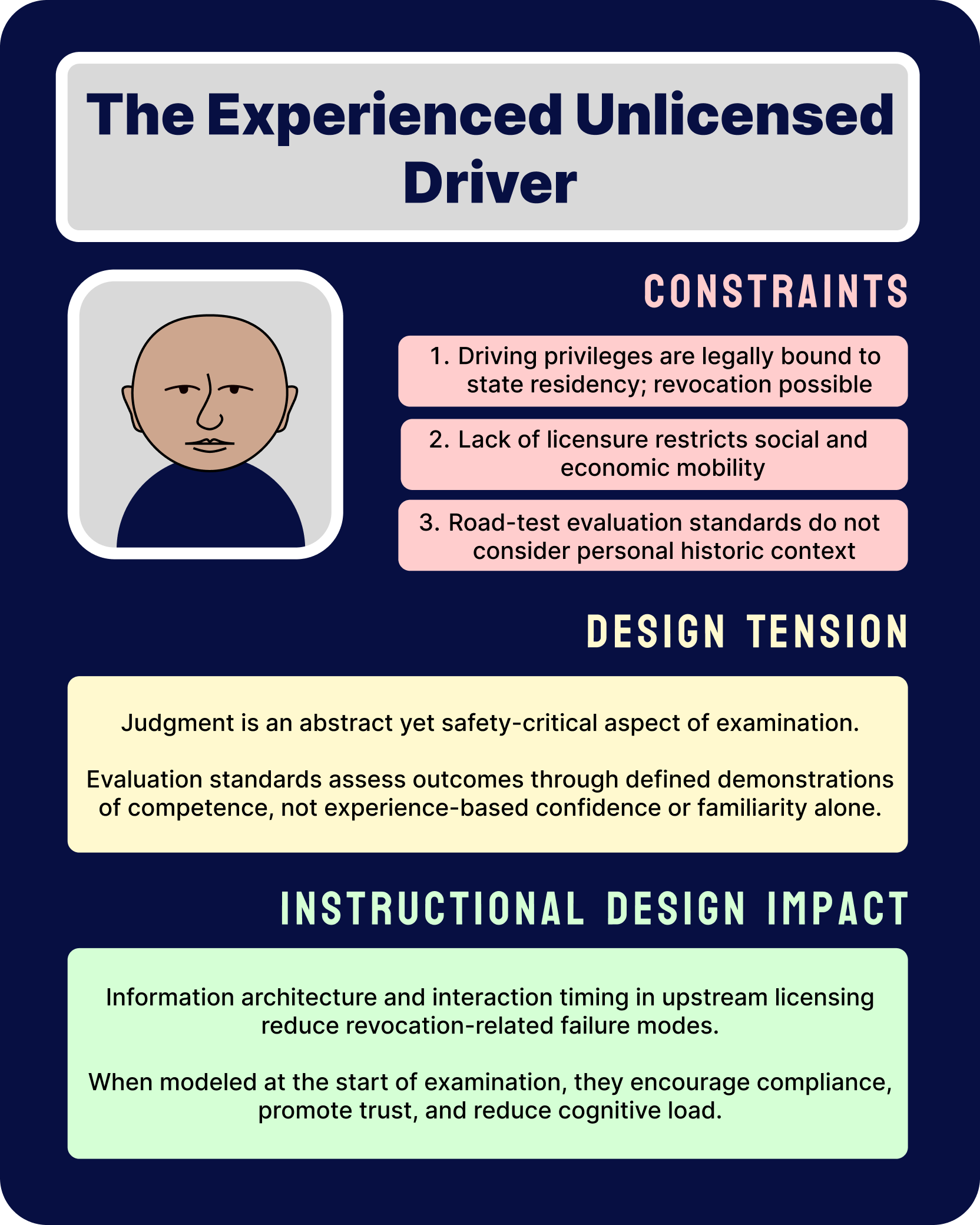

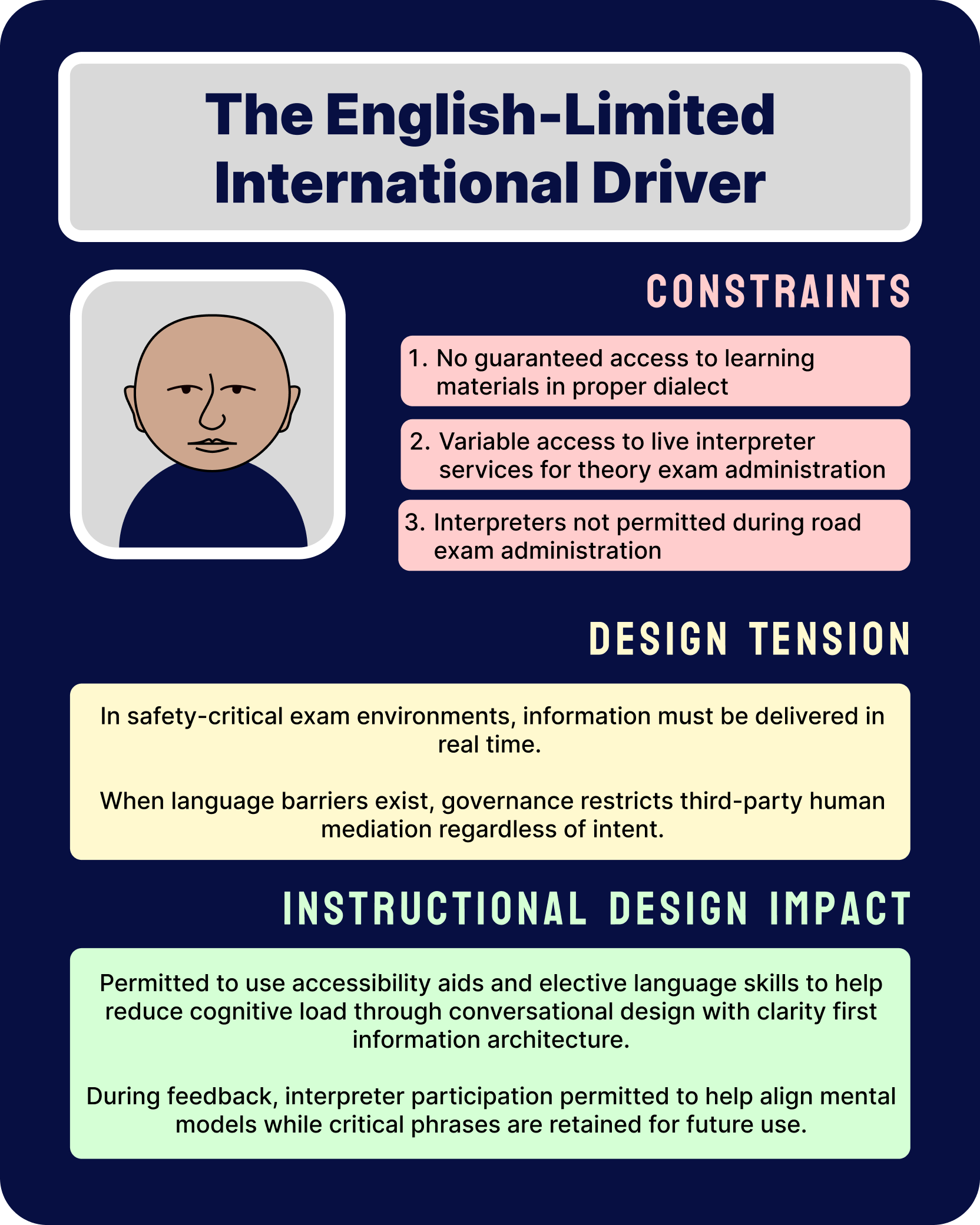

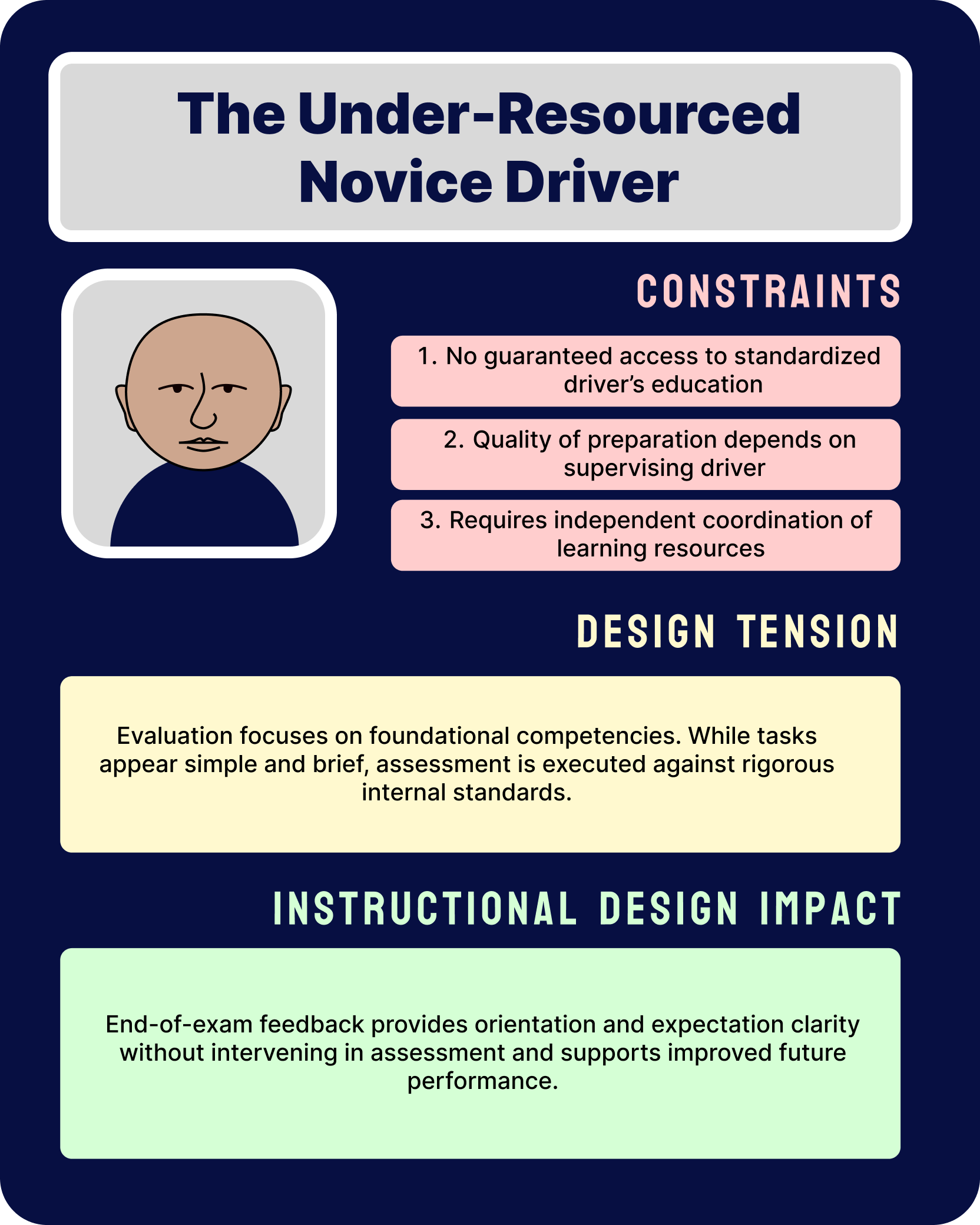

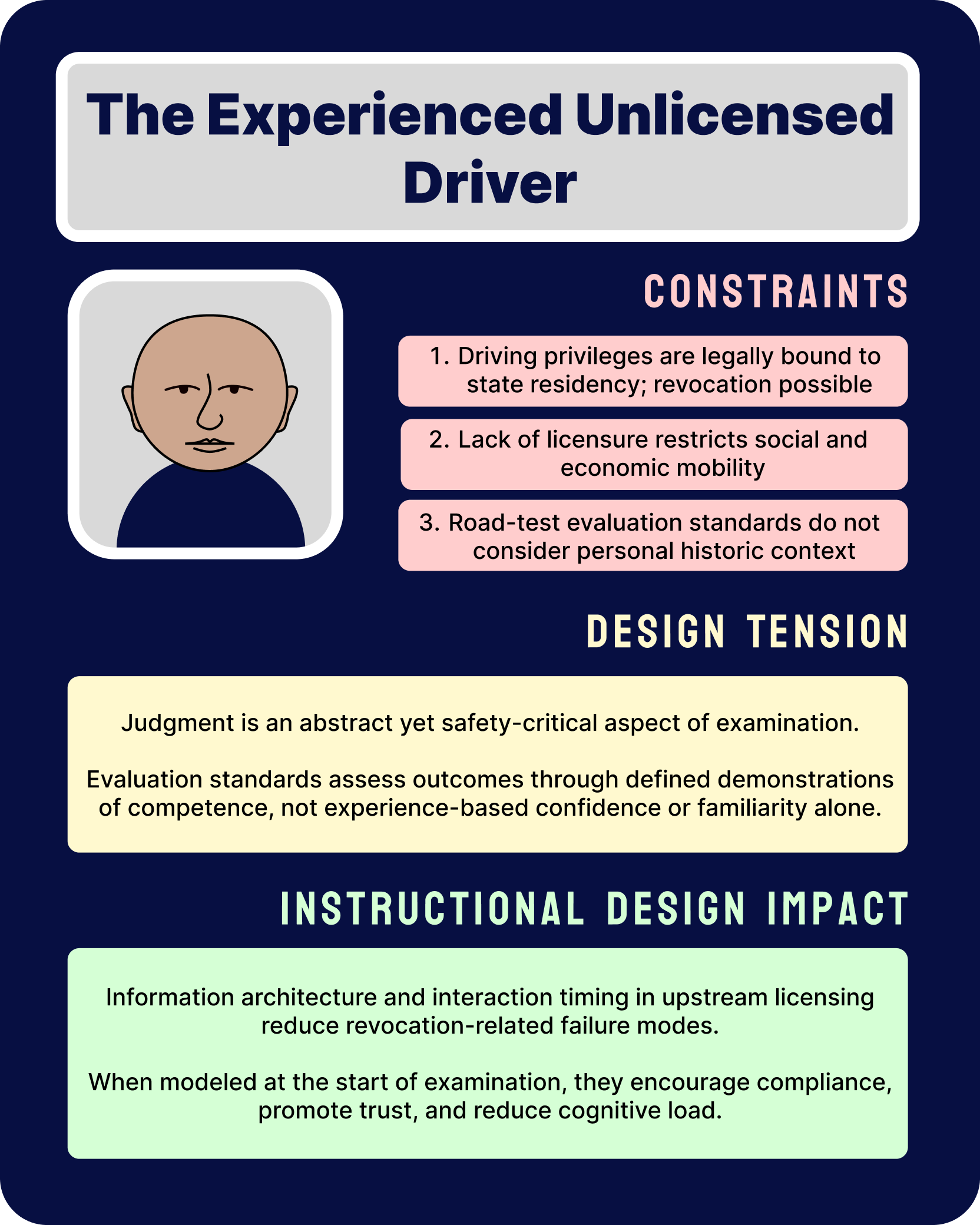

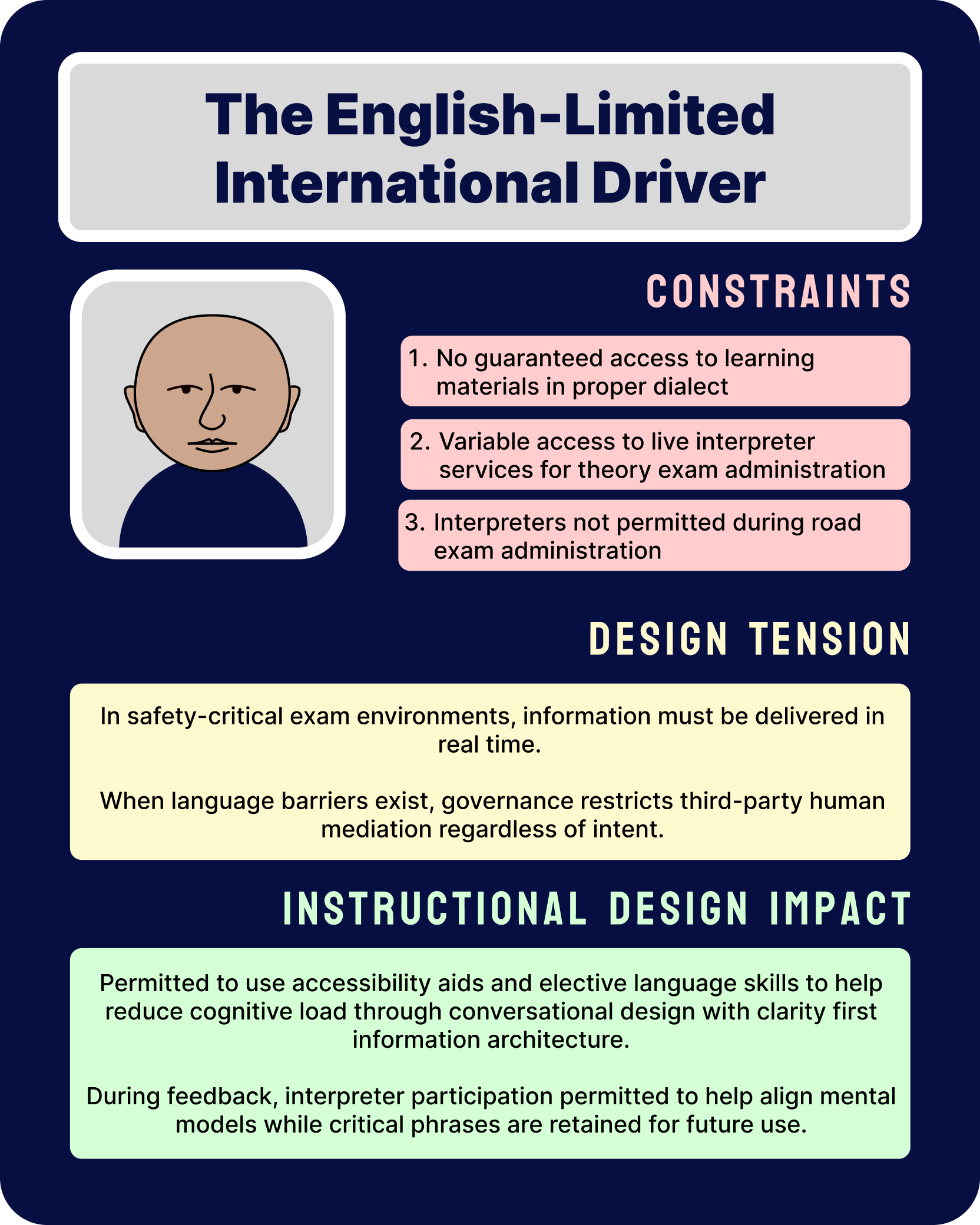

Rather than treating failures as isolated incidents, I synthesized recurring breakdown patterns into structured failure archetypes.

These artifacts map constraint-bound interaction points, externalize categories of frequently mismatched mental models, and clarify where lifecycle-level UX strategy can operate inside fixed governance standards.

By synthesizing recurring failure modes into archetypes, I was able to apply differentiated interaction design strategies at distinct points in the examination lifecycle:

Post-assessment feedback structuring for under-resourced novices to support future performance without intervening in evaluation

Upstream expectation modeling for experienced but unlicensed drivers to recalibrate overconfidence before assessment

Real-time clarity architecture for English-limited drivers to reduce cognitive overload under safety-critical constraints

These archetypes are not character judgments. They map constraint-bound decision environments, surfacing where regulatory rigor and human cognition misalign.

These artifacts translate recurring failure archetypes into structured design interventions that operate strictly within regulatory boundaries.

Each intervention addresses a cognitive or sequencing mismatch without altering formal scoring criteria.

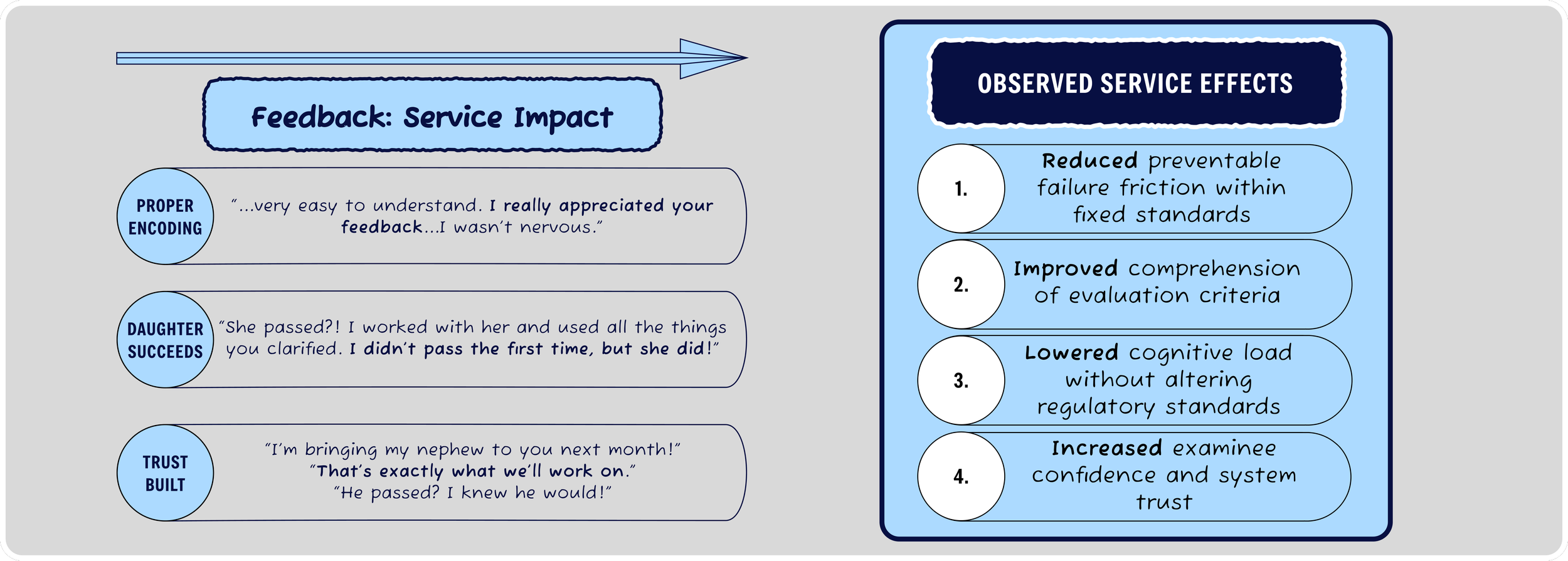

Outcome Synthesis: Longitudinal Service Impact Patterns

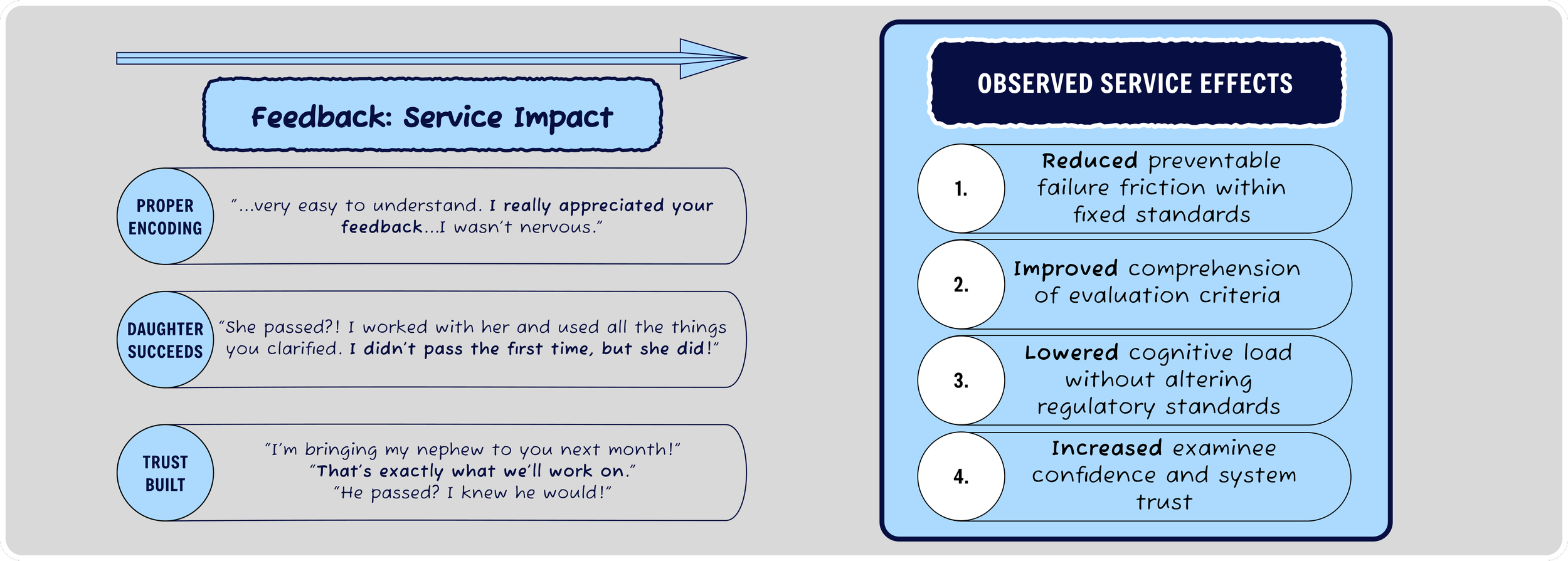

Over three years of longitudinal qualitative observation and strategy implementation, I recalibrated information architecture, instruction sequencing, and feedback structure across the examination lifecycle.

Across archetypes, these differentiated interaction strategies resulted in:

Reduced preventable breakdowns at predictable interaction points

Improved alignment between examinee mental models and evaluation criteria

Lower cognitive load in safety-critical moments

Increased system trust through transparent expectation modeling and feedback clarity

These outcomes were achieved without altering regulatory standards, by operating exclusively at the interaction and communication layer.

Across more than 3,000 administered safety-critical exams over more than 2.5 years, I observed fewer communication loops initiated by system users frustrated with outcomes stemming from the failure archetypes studied here.

I received substantial real-time user feedback while operating in this live service system. In response to the strategies discussed in this case study, that feedback maps directly to reduced cognitive load, increased user self-confidence, and increased system trust.

For more details, reach out or explore: My Curriculum Vitae (CV), Full Resume, or navigate to the live Resume page here on LMIT Designs.